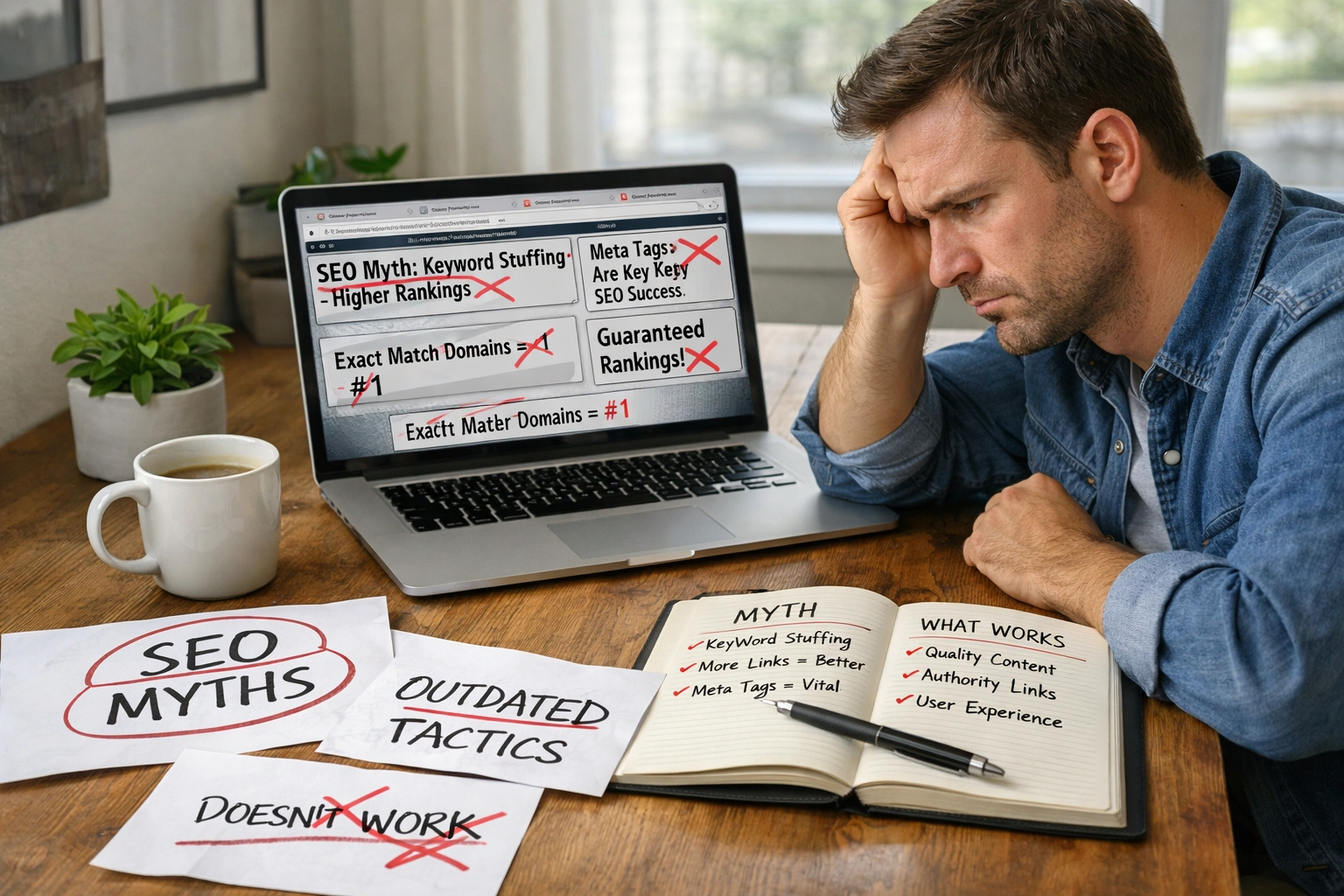

Search engine optimisation fails when content has no clear purpose because Google can’t figure out what problem you’re solving for users. The algorithm also tracks how people interact with your page, so if your content jumps between topics or mixes up search intent, visitors leave and your rankings suffer.

Frankly, we see this all the time with clients who thought keywords alone would do the trick. Without a defined SEO purpose, you’re basically publishing content that confuses both Google and your audience.

This article covers why clear intent beats keyword stuffing, how search behaviour influences rankings, and what happens when your content strategy lacks focus. Your pages might look perfectly optimised, but without a clear goal, they won’t rank.

Let’s fix that.

When Your Content Strategy Has No Clear Goal

A content strategy without direction results in scattered pages that compete with each other instead of ranking. We’ve seen sites lose about 40% of their traffic in just a few months when five different blog posts targeted the same keyword with zero coordination (and yes, this kind of internal competition hurts, because Google has to pick between your own pages).

Let’s break down what happens next.

Your Pages Start Competing Against Each Other

When you create content without mapping search intent first, multiple pages end up targeting the same search queries. For instance, one page tries to sell running shoes while another explains how they work (both using identical keyword phrases).

The result: your own site cannibalises its rankings, and you lose organic traffic to competitors who planned better.
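One way to catch this early is to list the primary keyword each page targets and flag any keyword claimed by more than one URL. Here’s a minimal Python sketch of that check. The URLs, keywords, and the `find_cannibalised_keywords` helper are all made up for illustration, and a real audit would pull this data from your own keyword map or rank tracker.

```python
from collections import defaultdict


def find_cannibalised_keywords(pages):
    """Return keywords targeted by more than one URL.

    `pages` maps each URL to the primary keyword it targets.
    """
    urls_by_keyword = defaultdict(list)
    for url, keyword in pages.items():
        # Normalise case and spacing so "Running Shoes " matches "running shoes".
        normalised = " ".join(keyword.lower().split())
        urls_by_keyword[normalised].append(url)
    # Keep only keywords that two or more pages are fighting over.
    return {
        keyword: sorted(urls)
        for keyword, urls in urls_by_keyword.items()
        if len(urls) > 1
    }


# Hypothetical keyword map: URL -> primary target keyword.
pages = {
    "/shop/running-shoes": "running shoes",
    "/blog/how-running-shoes-work": "Running Shoes",
    "/blog/marathon-training-plan": "marathon training plan",
}

conflicts = find_cannibalised_keywords(pages)
print(conflicts)
# {'running shoes': ['/blog/how-running-shoes-work', '/shop/running-shoes']}
```

Any keyword that shows up in the output needs a decision: merge the pages, or give each one a distinct intent and a distinct target phrase.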

Google Can’t Figure Out What You’re About

Search engines look for common themes and logical flow across your site. According to recent Australian SEO trends, sites with a clear content strategy in defined business areas consistently outrank scattered competitors.

And when Google can’t categorise your site properly, it struggles to rank you for anything specific.

Traffic Drops Because There’s No Clear Path

Users expect relevant results based on their search behaviour. If your landing page jumps between topics, they leave immediately. Analytics tools such as Google Analytics capture these quick exits through bounce rates and session times, a clear sign that your content failed to help people searching for solutions.

That tanks your rankings and costs you real customers. So how does Google spot this lack of direction in the first place?

How Google’s Search Algorithm Detects Aimless Content

Google’s search algorithm tracks user behaviour like clicks, time on page, and bounces to measure content quality. What does that mean exactly? Well, if someone clicks your page and leaves in ten seconds, Google notices and uses intent classification to score your content.

Google tracks three specific signals:

- Bounce Rate: When users land on your page and immediately return to search results, it tells Google your content missed the mark. This drops your rankings as Google protects user experience.

- Dwell Time: How long someone stays on your page tells Google a lot. From our experience auditing client sites, pages with a clear purpose keep visitors engaged three times longer than scattered content, which directly impacts how Google ranks them.

- Click Patterns: Google tracks which results users choose and if they return to search for better answers. If people consistently skip your site, the algorithm learns your content doesn’t satisfy that search intent and adjusts rankings accordingly.
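You can’t see Google’s internal numbers, but you can approximate the first two signals from your own analytics export. The sketch below is an illustration only: the `Session` fields, the sample visits, and the ten-second cut-off (borrowed from the example above) are assumptions, not anything Google publishes.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    url: str
    seconds_on_page: float
    returned_to_results: bool  # visitor went straight back to the search results


def engagement_summary(sessions, quick_exit_seconds=10.0):
    """Summarise quick exits and average time on page for a list of sessions."""
    if not sessions:
        return {"quick_exit_rate": 0.0, "avg_seconds_on_page": 0.0}
    # A quick exit is a short visit that ended with a return to the results page.
    quick_exits = sum(
        1
        for s in sessions
        if s.returned_to_results and s.seconds_on_page <= quick_exit_seconds
    )
    return {
        "quick_exit_rate": quick_exits / len(sessions),
        "avg_seconds_on_page": mean(s.seconds_on_page for s in sessions),
    }


# Hypothetical visits to a single landing page.
sessions = [
    Session("/blog/running-shoes", 8.0, True),
    Session("/blog/running-shoes", 95.0, False),
    Session("/blog/running-shoes", 5.0, True),
    Session("/blog/running-shoes", 132.0, False),
]

summary = engagement_summary(sessions)
print(summary)
```

Run it per page and compare: pages with a high quick-exit rate and a low average time on page are the first candidates for a purpose check.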

But knowing Google watches behaviour is only half the puzzle. The real challenge is understanding what type of intent your content should serve in the first place.

Mixing Up Informational Intent and Commercial Intent

You wrote a buying guide, but stuffed it with beginner definitions. Mixing informational intent and commercial intent like this confuses both Google and your audience fast.

Take a common search like “best running shoes for beginners”. Someone typing that is ready to compare and buy, so if your page spends 800 words explaining what running shoes are, you’ve already lost them.

So, the problem is that each intent type needs its own approach:

- Informational Intent: Users want answers, explanations, or how-to guides without any buying pressure.

- Commercial Intent: People are researching and comparing options before they’re ready to commit.

- Transactional Intent: They’ve made their decision and want to complete a purchase right now.

Trying to serve multiple intents on the same page waters down your focus and confuses search engines about where to rank you.
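A rough first pass at sorting queries into these three buckets is to look for modifier words. The sketch below is a deliberately simple heuristic, and the modifier lists are our own assumptions for illustration; it is not how Google classifies intent, and checking the actual results page is still the more reliable test.

```python
# Assumed modifier words per intent type (illustrative, not exhaustive).
# Checked in this order, so the strongest buying signals win.
INTENT_MODIFIERS = {
    "transactional": ("buy", "order", "price", "discount", "coupon"),
    "commercial": ("best", "top", "review", "vs", "compare"),
    "informational": ("what", "how", "why", "guide", "tutorial"),
}


def classify_intent(query):
    """Guess the intent of a search query from the modifier words it contains."""
    words = set(query.lower().split())
    for intent, modifiers in INTENT_MODIFIERS.items():
        if words & set(modifiers):
            return intent
    # No modifier found: the query could belong to any intent type.
    return "unclear"


for query in (
    "best running shoes for beginners",
    "what is SEO",
    "buy running shoes online",
    "running shoes",
):
    print(f"{query!r} -> {classify_intent(query)}")
# 'best running shoes for beginners' -> commercial
# 'what is SEO' -> informational
# 'buy running shoes online' -> transactional
# 'running shoes' -> unclear
```

Notice that a bare phrase like “running shoes” comes back as unclear. Queries like that are the ones to check by hand before you build a page around them.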

Believe it or not, we’ve audited sites where every page tried to both educate and sell, and ranked for neither. One Brisbane ecommerce client had product pages that read like Wikipedia entries (because nothing says ‘trust me’ like a hard sell in a beginner’s guide). What’s more, Google couldn’t figure out whether to rank them for searches about education or buying.

Ultimately, each page needs one clear purpose aligned with the audience’s intent. We recommend matching your content format to what people searching need, and Google will reward that clarity.

But understanding intent is only half the equation. You also need the right keywords to back it up.

Why Keyword Research Alone Isn’t Enough

Keyword research identifies terms people often search for, but it doesn’t reveal what they want to do once they land. You can rank for high-volume keywords and still get zero conversions if your content doesn’t match user intent.

Our tests revealed something interesting: pages stuffed with high-volume keywords but misaligned intent ranked lower than pages with fewer keywords but a crystal-clear purpose. Here’s why keyword data alone falls short.

- Search Volume Doesn’t Equal Search Intent: A keyword phrase like “running shoes” gets millions of searches monthly. But those searches are split between learning about types, comparing brands, and ready-to-buy shoppers. So targeting volume alone puts you in competition with every intent type instead of serving one audience well.

- Keyword Tools Miss Context Clues: Most keyword research platforms show search volume and difficulty, but they don’t tell you what users expect to find. After plenty of trial and error, we’ve learned that analysing search engine results pages reveals the real intent patterns behind the numbers.

- Intent-First Strategy Beats Keyword-First Strategy: Start by identifying where your audience sits in the marketing funnel. Then find keywords matching that stage. This strategy creates content that converts because it serves a specific need.

Getting intent right first makes keyword research useful. However, your content can still fail if it doesn’t guide people through your marketing funnel.

Ignoring Marketing Funnels in Your Content Marketing

It’s equally important to make sure your content moves people forward.

Blog posts should guide users through awareness, consideration, and decision stages instead of treating every visitor the same. After all, someone Googling “what is SEO” isn’t ready to book a consultation yet.

Let’s discuss how each funnel stage works differently.

Awareness Stage

At the top of the marketing funnel, users recognise they have a problem but haven’t figured out how to solve it yet. Your blog posts at this stage should answer their questions without pushing products.

This is where you should focus on education that builds credibility, instead of sales pitches.

Consideration Stage

Once users understand their problem, they start comparing approaches.

From our experience with Brisbane clients, this stage performs best with case studies and detailed how-to content, because people searching at this level want proof that your solution works before committing to anything.

Decision Stage

Users here know what they need. They’re evaluating specific providers, which means product pages, pricing guides, and testimonials work because commercial intent and transactional intent align.

The bottom line is that your content should remove final objections and make the next step obvious.

So it’s best to map your content to funnel stages, or risk confusing every visitor who lands on your site. Even then, jumping between formats without a plan can ruin your strategy.
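To see where your own site stands, tag each page with a content type and count how many pages serve each stage. The sketch below assumes a simple content-type-to-stage mapping based on the three stages above; the type labels, URLs, and the `funnel_coverage` helper are hypothetical, so adjust them to match how you categorise your own content.

```python
FUNNEL_STAGES = ("awareness", "consideration", "decision")

# Assumed mapping from content type to the funnel stage it usually serves.
STAGE_BY_CONTENT_TYPE = {
    "explainer": "awareness",
    "how-to": "consideration",
    "case study": "consideration",
    "product page": "decision",
    "pricing guide": "decision",
    "testimonial": "decision",
}


def funnel_coverage(content_inventory):
    """Count pages per funnel stage and list pages with no stage assigned."""
    counts = {stage: 0 for stage in FUNNEL_STAGES}
    unmapped = []
    for url, content_type in content_inventory:
        stage = STAGE_BY_CONTENT_TYPE.get(content_type)
        if stage is None:
            # No clear funnel purpose: review, repurpose, or retire this page.
            unmapped.append(url)
        else:
            counts[stage] += 1
    return counts, unmapped


# Hypothetical content inventory: (URL, content type).
inventory = [
    ("/blog/what-is-seo", "explainer"),
    ("/case-studies/brisbane-retailer", "case study"),
    ("/blog/seo-audit-steps", "how-to"),
    ("/news/office-move", "announcement"),
]

coverage, unmapped = funnel_coverage(inventory)
gaps = [stage for stage in FUNNEL_STAGES if coverage[stage] == 0]
print(coverage)  # pages per stage
print(gaps)      # stages with no content at all
print(unmapped)  # pages that fit no stage
```

In this sample inventory the decision stage is empty, which means visitors who are ready to choose a provider have nowhere to go.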

Jumping Between Content Formats Without a Plan

Consistent formats help search engines understand your site and build trust with your audience. Publishing a how-to guide one week, a product comparison the next, and a case study after signals you’re throwing everything at the wall (which Google reads as a lack of topical authority).

The problem gets worse when you consider intent. Each format serves a different search intent, so jumping between listicles, long-form guides, and product reviews without purpose leaves search engines confused.

We suggest you pick two or three formats that align with your business goals and stick with them. That consistency tells Google exactly what you do, which improves how you rank.

So how do you fix content that’s already missing a clear purpose?

Define Your SEO Purpose or Watch Your Rankings Suffer

Content without a clear purpose bleeds traffic and revenue every single day. What’s worse, when your pages lack direction, Google pushes them down in search results. The fix starts with defining exactly what problem each page solves and who it serves before you write a single word.

We covered why scattered content hurts rankings, how Google spots aimless pages, and why mixing intent types confuses your audience. The solution is to align every piece of content with your marketing funnel stages to drive real conversions.

At Accuvant Labs Blog, our team specialises in building content strategies that rank. We’ll take you through every stage you need to turn scattered pages into focused assets. Visit us today for proven search engine optimisation tactics.